The Final Recrawl Analysis: A Powerful and Important Last Step After Implementing Large-Scale SEO Changes

Habazar Web Marketing and Social Media Campaign Management

When helping companies deal with performance drops from major algorithm updates, website redesigns, CMS migrations and other disturbances in the SEO force, I find myself crawling a lot of URLs. That typically includes a number of crawls during a client engagement. For larger-scale sites, it's not uncommon for me to surface many problems when analyzing crawl data, from technical SEO issues to content quality problems to user engagement barriers.

After surfacing those problems, it's extremely important to form a remediation plan that tackles those issues, fixes the problems and improves the quality of the website overall. If not, a site might not recover from an algorithm update hit, it could sit in the gray area of quality, technical problems could sit festering, and more.

As Google's John Mueller has explained a number of times about recovering from quality updates, Google wants to see significant improvement in quality, and over the long term. So basically, fix all of your problems, and then you might see positive movement down the line.

Crawling: Enterprise versus surgical

When digging into a site, you typically want to get a feel for the site overall first, which would include an enterprise crawl (a larger crawl that covers enough of a site for you to gain a good amount of SEO intelligence). That does not mean crawling an entire site. For example, if a site has 1 million pages indexed, you might start with a crawl of 200-300K pages.

Here are several initial enterprise crawls I have performed, ranging from 250K to 440K URLs.

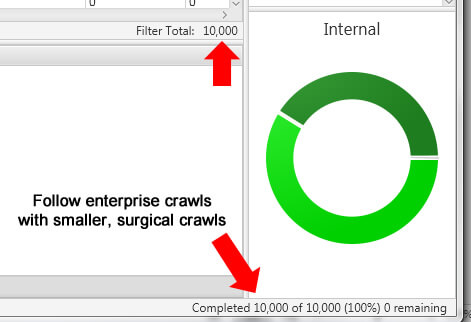

Based on the initial crawl, you might then launch several surgical crawls focused on specific areas of the site. For example, notice a lot of thin content in X section of a site? Then focus the next crawl just on that section. You might crawl 25-50K URLs or more in that area alone to get a better feel for what's going on there.

When it's all said and done, you can launch a number of surgical crawls during an engagement to focus your attention on problems in those specific areas. For example, here's a smaller, surgical crawl of just 10K URLs (focused on a specific area of a website).

All of the crawls help you identify as many problems on the site as possible. Then it's up to you and your client's team (a combination of marketers, project managers, designers and developers) to implement the changes that need to be completed.

Next up: Auditing staging (awesome, but not the last mile)

When helping clients, I typically receive access to a staging environment so I can check changes before they hit the production site. That's a great way to nip problems in the bud. Unfortunately, there are times when incorrectly implemented changes lead to more problems. For example, if a developer misunderstood a topic and implemented the wrong change, you could end up with more problems than when you started.

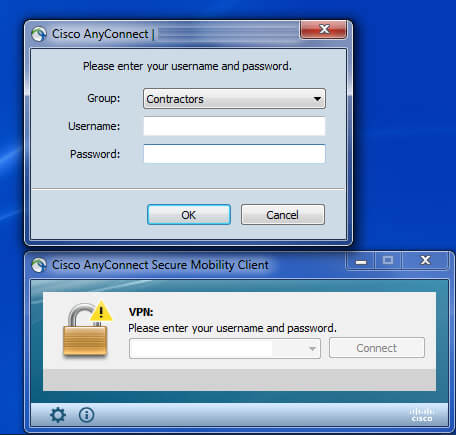

You absolutely want to make sure all changes being implemented are correct, or you could end up in worse shape than before the audit. One way to crawl staging when it's not publicly available is to have VPN access. I covered that in a previous post about how to crawl a staging server before changes get pushed to production.

But here's the rub. We're now talking about the staging environment and not production. There are times changes get pushed to production from staging and something goes wrong. Maybe directives get botched, a code glitch breaks metadata, site design gets impacted (which also impacts usability), mobile URLs are negatively impacted, and so on and so forth.

Therefore, you definitely want to check changes in staging, but you absolutely want to double check those changes once they go live in production. I can't tell you how many times I've checked the production site after changes get pushed live and found problems. Sometimes they are small, but sometimes they aren't so small. But if you catch them when they first roll out, you can nuke those problems before they cause long-term damage.

The reason I bring all of this up is that it's critically important to check changes all along the path to production, and then obviously once changes hit production. That includes recrawling the site (or sections) where the changes have gone live. Let's talk more about the recrawl.

The recrawl analysis and comparing changes

Now, you might be saying that Glenn is talking about a lot of work here … well, yes and no. Luckily, some of the top crawling tools enable you to compare crawls. That can help you save a lot of time with the recrawl analysis.

I've mentioned two of my favorite crawling tools many times before, which are DeepCrawl and Screaming Frog. (Disclaimer: I'm on the customer advisory board for DeepCrawl and have been for a number of years.) Both are excellent crawling tools that provide a large amount of functionality and reporting. I often say that when using both DeepCrawl and Screaming Frog for auditing sites, 1 + 1 = 3. DeepCrawl is powerful for enterprise crawls, while Screaming Frog is outstanding for surgical crawls.

Credit: GIPHY

DeepCrawl and Screaming Frog are awesome, but there's a new kid on the block, and his name is Sitebulb. I've just started using Sitebulb, and I'm digging it. I would definitely take a look at Sitebulb and give it a try. It's just another tool that can complement DeepCrawl and Screaming Frog.

Comparing changes in each tool

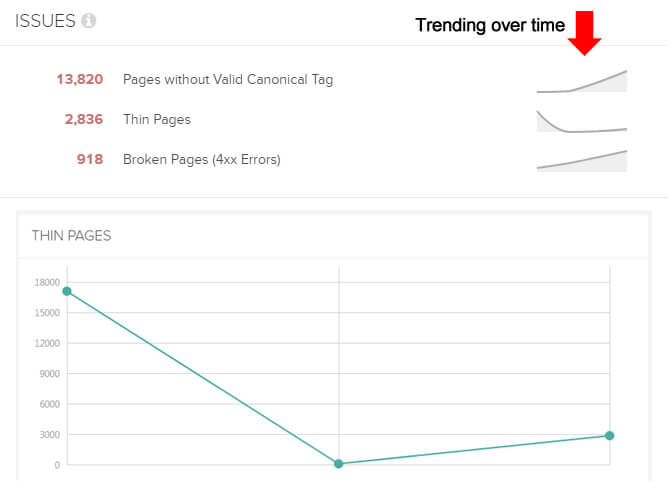

When you recrawl a site via DeepCrawl, it automatically tracks changes between the last crawl and the current crawl (while providing trending across all crawls). That's a big help for comparing problems that were surfaced in previous crawls. You'll also see trending of each problem over time (if you perform more than just two crawls).

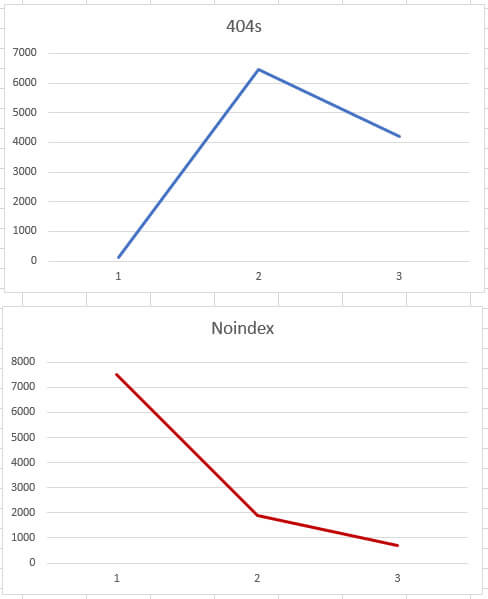

Screaming Frog doesn't provide compare functionality natively, but you can export problems from the tool to Excel. Then you can compare the reporting to check the changes. For example, did 404s drop from 15K to 3K? Did excessively long titles drop from 45K to 10K? Did pages noindexed correctly increase to 125K from 0? (And so on and so forth.) You can create your own charts in Excel pretty easily.
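If you'd rather not do that comparison by hand, the same before-and-after count can be scripted. Here's a minimal sketch in Python, assuming each crawl is exported as a CSV with an "Address" column and a "Status Code" column. Those column names, the sample rows, and the helper names `count_by_column` and `compare_counts` are illustrative assumptions, so adjust them to match whatever your export actually contains.

```python
import csv
import io
from collections import Counter


def count_by_column(csv_text, column):
    """Count rows per distinct value of `column` in one crawl export."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return Counter(row[column] for row in reader)


def compare_counts(before, after):
    """Return {value: (before, after, delta)} for every value seen in either crawl."""
    keys = sorted(set(before) | set(after))
    # Counter returns 0 for values that are missing from one of the crawls.
    return {k: (before[k], after[k], after[k] - before[k]) for k in keys}


# Tiny made-up exports standing in for real crawl files (assumed column names).
before_csv = "Address,Status Code\n/a,200\n/b,404\n/c,404\n"
after_csv = "Address,Status Code\n/a,200\n/b,200\n/c,404\n"

changes = compare_counts(
    count_by_column(before_csv, "Status Code"),
    count_by_column(after_csv, "Status Code"),
)
for status, (old, new, delta) in changes.items():
    print(f"{status}: {old} -> {new} ({delta:+d})")
```

For real exports, read each file's text and pass it in the same way. The same two functions work for any categorical column, such as an indexability or title-length flag, if your export has one.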

And now comes the young punk named Sitebulb. You'll be happy to know that Sitebulb provides the ability to compare crawls natively. You can click any of the reports and check changes over time. Sitebulb keeps track of all crawls for your project and reports changes over time per category. Awesome.

As you can see, the right tools can increase your efficiency while crawling and recrawling sites. After problems have been surfaced, a remediation plan created, changes implemented, changes checked in staging, and then the updates pushed to production, a final recrawl is critically important.

Having the ability to compare changes between crawls can help you identify any changes that aren't completed correctly or that need more refinement. And for Screaming Frog, you can export to Excel and compare manually.

Now let's talk about what you can find during a recrawl analysis.

Pulled from production: Real examples of what you can find during a recrawl analysis

After changes get pushed to production, you're fully exposed SEO-wise. Googlebot will undoubtedly start crawling those changes soon (for better or for worse).

To quote Forrest Gump, “Life is like a box of chocolates, you never know what you're gonna get.” Well, thorough crawls are the same way. There are many potential problems that can be injected into a site when changes go live (especially on complex, large-scale sites). You might be surprised by what you find.

Below, I've listed real problems I have surfaced during various recrawls of production while helping clients over the years. These bullets are not fictional. They actually happened and were pushed to production by accident (the CMS caused problems, the dev team pushed something by accident, there was a code glitch and so on).

Murphy's Law, the idea that anything that can go wrong will go wrong, is real in SEO, which is why it's critically important to check all changes after they go live.

Remember, the goal was to fix problems, not add new ones. Luckily, I picked up the problems quickly, sent them to each dev team, and removed them from the equation.

- Canonicals were completely stripped from the site when the changes were pushed live (the site had 1.5M pages indexed).

- The meta robots tag using noindex was incorrectly published in multiple sections of the site by the CMS. And those additional sections drove a significant amount of organic search traffic.

- On the flip side, in an effort to improve the mobile URLs on the site, thousands of blank or nearly blank pages were published to the site (but only accessible by mobile devices). So, there was an injection of thin content, which was invisible to the naked eye.

- The wrong robots.txt file was published, and thousands of URLs that shouldn't be crawled were being crawled.

- Sitemaps were botched and were not updating correctly. That included the Google News sitemap, and Google News drove a lot of traffic for the site.

- Hreflang tags were stripped out by accident. And there were 65K URLs containing hreflang tags targeting multiple countries per cluster.

- A code glitch pushed double the amount of ads above the fold. So where you had one annoying ad taking up a large amount of space, the site now had two. Users had to scroll heavily to get to the main content (not good from an algorithmic standpoint, a usability standpoint, or from a Chrome actions standpoint).

- Links that had been nofollowed for years were suddenly followed again.

- Navigation changes were actually freezing menus on the site. Users couldn't access any drop-down menu on the site until the problem was fixed.

- The code handling pagination broke, and rel next/prev and rel canonical weren't set up correctly anymore. And the site contains thousands of pages of pagination across many categories and subcategories.

- The AMP setup was broken, and each page with an AMP alternative didn't contain the proper amphtml code. And rel canonical was removed from the AMP pages as part of the same bug.

- Title tags were improved in key areas, but HTML code was added by accident to a portion of those titles. The HTML code started breaking the title tags, resulting in titles that were 800+ characters long.

- A code glitch added additional subdirectories to each link on a page, which all led to empty pages. And on those pages, more directories were added to each link in the navigation. This created the perfect storm of unlimited URLs being crawled with thin content (infinite spaces).
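Several of the problems in the list above (stripped canonicals, a stray noindex, missing hreflang or amphtml, bloated titles) show up directly in a page's HTML head, so they can be spot-checked by comparing the same URL before and after a release. The sketch below is one way to do that with Python's standard `html.parser`. It is not how any particular crawling tool works. The class and function names, the sample HTML, and the 70-character title threshold are all assumptions made for illustration.

```python
from html.parser import HTMLParser


class HeadSignals(HTMLParser):
    """Collect a handful of SEO-relevant tags from one HTML document."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.amphtml = None
        self.robots = None
        self.hreflangs = []
        self.title = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "link":
            rel = (a.get("rel") or "").lower()
            if rel == "canonical":
                self.canonical = a.get("href")
            elif rel == "amphtml":
                self.amphtml = a.get("href")
            elif rel == "alternate" and a.get("hreflang"):
                self.hreflangs.append(a["hreflang"])
        elif tag == "meta" and (a.get("name") or "").lower() == "robots":
            self.robots = (a.get("content") or "").lower()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def extract(html):
    parser = HeadSignals()
    parser.feed(html)
    parser.close()
    return parser


def regressions(before_html, after_html, max_title=70):
    """List signals that got worse between two versions of the same page.

    max_title is an arbitrary illustrative threshold, not an official limit.
    """
    old, new = extract(before_html), extract(after_html)
    problems = []
    if old.canonical and not new.canonical:
        problems.append("canonical removed")
    if "noindex" in (new.robots or "") and "noindex" not in (old.robots or ""):
        problems.append("noindex added")
    if set(old.hreflangs) - set(new.hreflangs):
        problems.append("hreflang removed")
    if old.amphtml and not new.amphtml:
        problems.append("amphtml removed")
    if len(new.title) > max_title:
        problems.append("title too long")
    return problems


# Made-up before/after markup for a single hypothetical URL.
before = (
    '<html><head><title>Widgets</title>'
    '<link rel="canonical" href="https://example.com/w">'
    '<link rel="alternate" hreflang="de" href="https://example.com/de/w">'
    '</head><body></body></html>'
)
after = (
    '<html><head><title>Widgets</title>'
    '<meta name="robots" content="noindex, follow">'
    '</head><body></body></html>'
)

found = regressions(before, after)
print(found)
```

A check like this only covers what is in the raw HTML. Problems such as frozen menus or extra ads above the fold still need a rendered crawl or a manual look.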

I think you get the picture. This is why checking staging alone is not good enough. You need to recrawl the production site as changes go live to ensure those changes are implemented correctly. Again, the problems listed above were surfaced and corrected quickly. But if the site hadn't been crawled again after the changes went live, they could have caused big problems.
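One narrow check that can run the moment changes go live is robots.txt, since publishing the wrong file was one of the real incidents above. As a rough sketch, Python's standard `urllib.robotparser` can test a list of URLs you expect to be blocked against the published rules. The function name `still_crawlable`, the sample rules, and the sample URLs are hypothetical. Note also that this parser does not reproduce all of Google's matching behavior (wildcards, for example), so treat it as a smoke test rather than a final answer.

```python
from urllib.robotparser import RobotFileParser


def still_crawlable(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs from `urls` that the given robots.txt text allows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if parser.can_fetch(user_agent, u)]


# Hypothetical rules: what was intended versus what actually got published.
intended = "User-agent: *\nDisallow: /search/\nDisallow: /cart/\n"
published = "User-agent: *\nDisallow: /cart/\n"

# URLs that are supposed to stay blocked (made up for this example).
must_block = [
    "https://example.com/search/?q=shoes",
    "https://example.com/cart/checkout",
]

leaks_intended = still_crawlable(intended, must_block)
leaks_published = still_crawlable(published, must_block)
print(leaks_intended)
print(leaks_published)
```

Any URL in the second list is one that should have been blocked but is open to crawling under the published file.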

Overcoming Murphy's Law for SEO

We don't live in a perfect world. Nobody is trying to sabotage the site when pushing changes live. It's simply that working on large and complex sites leaves the door open to small bugs that can cause big problems. A recrawl of the changes you guided can nip those problems in the bud. And that can save the day SEO-wise.

For those of you already running a final recrawl analysis, that's awesome. For those of you trusting that your recommended changes get pushed to production correctly, read the list of real problems I uncovered during a recrawl analysis again. Then make sure to include a recrawl analysis in your next project. That's the “last mile.”